I recently had the opportunity to join a Brisbane organisation as a Developer Lead: a fast-paced organisation with a large user base and a reasonably high volume of financial transactions. As is often the case with these things, you don’t really appreciate how much was going on until you sit back and think about it later. I thought it was worth sharing some of this, as many of the topics, techniques, scenarios and challenges often come up in interviews and on other software support and development sites.

The Challenge

The company I worked for employed nearly 100 software developers on a single floor. At peak events, the public-facing website would have 40,000+ users online concurrently, and we were receiving upwards of 6,000-7,000 financial transactions per minute. Every year, the company collected many hundreds of millions of dollars’ worth of credit card deposits. Aside from when the site was down, there was never a minute, day or night, when we were not processing transactions. Needless to say, this site could NEVER be down. If it was, it would cost the business tens of thousands of dollars per minute in lost revenue.

The site was built on a vast range of software technologies and languages, old and new, ranging from older Meteor, jQuery and PHP 5 code through Node.js and iOS to newer technologies like React and Golang in a microservices architecture orchestrated with Kubernetes and Docker in our AWS cloud. As everyone who has worked in this kind of environment knows, a mix of old and new technologies is a common and often inevitable legacy build-up in an application that is extraordinarily successful and evolving hyper-rapidly with a large user base.

The development floor was broken into product feature development groups with 6-8 developers in each. My team was the BAU (Business As Usual) team, about 8-9 guys in the end, responsible for support, bug fixes, minor features and keeping the lights on 24/7/365.

Our main immediate stakeholders were internal customer support staff online 24/7 dealing with countless customers calling in. Needless to say, I needed to develop a team that was highly agile, able to work under considerable pressure, skilled across a full stack of varied technologies and software languages… oh, and available day and night.

In the end, the team that we grew was awesome: an amazing range of skills, fun to be with and calm in the face of not-infrequent site disruptions and panic-stricken customer support staff facing screaming customers.

Hiring the team

My approach to hiring for this team was to look at the developer as a person first and at developer skills second. They needed not only to survive the gruelling early-morning support calls and be reasonably outgoing, but also to be fire-fighters: people who thrive under pressure and get their gratification from solving urgent, high-priority problems quickly.

The interview process was simple.

- Review their resumes and weed out the bullshitters. You get an eye for this. As a Dev Lead hiring developers, you will get about a billion recruiters contacting you a day. It quickly becomes obvious that some are better than others in how they communicate, how much candidate groundwork they do and the quality of the resumes they deliver.

- I maintained a record of the people and resumes we had seen, and we saw many resumes and did many interviews. Some developers resurfaced repeatedly. Keeping notes and comments for each developer who had applied was immensely helpful. It is amazing how quickly you forget details and what your impressions of an interviewee were. Stating the obvious, I guess.

- In the interview, we were casual, with one HR person and one tech guy (me). It was more of a conversation than a hard and fast interview. Don’t get me wrong. We were not lying around in bean bags sipping macchiatos. Our aim was to get people to relax and talk freely about their past experience, how wide their technology exposure was and how much first-response support they had done, and to watch whether they started to twitch when we said we had a 24/7 roster.

- We did get them to do code tests, but not while staring over their shoulders scrutinising their code style. The test was super simple, with just basic guidelines on what we expected. I encouraged people to come up with a simple, clean solution to a basic site where they put their best foot forward, showing good structure and code style, and delivered a well-documented product that I could spin up and have a look at. We did not put a time limit on the test but suggested it should not take more than 4 hours. This worked well and we hired great skill on the back of it. Having said that, it was amazing how many people had not taken the time to do basic structuring or code styling, and some had even plagiarised whole code sets from GitHub.

This process proved consistently beneficial: most of the people we hired stayed for the duration, flourished and became great members of the team.

Good on-boarding documentation, tools and processes for bringing a new developer on to the floor were really important. Having a structured way of ensuring that the developer gets a feel for the whole picture is critical for getting them productive and comfortable in their new environment. Being employed by a company with 100+ developer peers can be intimidating, both in terms of how your skills match up against everyone else’s and how everything works, even if you are a senior developer. A developer coming into that environment is often self-siloed as they don’t want to appear stupid. Submitting your code for review by two other developers on the floor can be confronting. We recognised this and dealt with it as much as we could through frequent one-on-one communication coupled with open discussions in stand-ups.

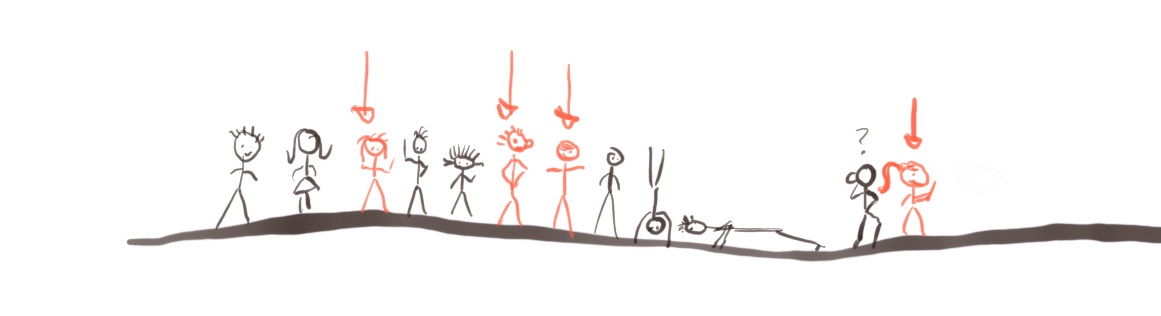

Stakeholders, Tickets and Triaging

We used Jira and its Kanban board to manage our incoming tickets. In the lead-up to big events for the business, BAU would receive 200+ tickets a month. That perhaps doesn’t sound like much, but it kept us busy. These tickets would range from 2-hour fixes to 4-5 day feature improvements. We had 8-9 developers in the BAU team and we were able to get through an average of around 150-170 tickets a month.

With this many incoming tickets, priorities and triaging were clearly going to be a challenge. To mitigate this, I met with the internal stakeholder department managers once every two weeks and got them to participate in the triaging of their tickets. This worked really well as they got to decide for themselves the order in which we needed to address the tickets. People easily get frustrated when they don’t see their problems fixed and don’t understand why it is taking so long. These meetings helped relieve this and created great, dynamic working relationships with the rest of the business. I also created a weekly Confluence BAU snapshot where I outlined the big or serious issues BAU had addressed the previous week, how many tickets we had processed, the status of key tickets, the number of after-hours calls and what we were focusing on in the coming week.

As we had a fair number of tickets come through in a week, a number of them P1 issues, this role was largely hands-off for me. It was clear that I could manage any number of concurrent P1/P2 issues if I delegated, but far fewer if I went back on the tools. Depending on ticket volume, focusing on designing, debugging and developing software does not always go well with managing people and stakeholders. One takes concentration and silo space while the other requires constant communication. They often don’t mix.

Within the team, we had daily 15-minute stand-ups to hear what others were working on. While all team members were able to work in various areas of the code, you get a feel for the type of technology each person excels in. That is important knowledge when a screaming urgent issue comes along and you need to delegate it for fast resolution.

We all agreed that any tickets older than 6 months that had not yet been addressed should be closed. There was lots of debate around this but, in the end, there is no point in having tickets lying around that are not important enough for you to allocate resources to or hire more people for. Just close them and remove unnecessary noise.
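For anyone wanting to apply the same housekeeping rule, here is a minimal sketch of how stale tickets could be picked out. It is not what we ran in Jira; the `Ticket` class, the status names and the 183-day approximation of "6 months" are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumption: "6 months" is approximated as 183 days.
STALE_AFTER = timedelta(days=183)


@dataclass
class Ticket:
    """Hypothetical ticket record; real trackers expose far more fields."""
    key: str
    created: date
    status: str  # assumed values: "open", "in_progress", "closed"


def stale_tickets(tickets, today):
    """Return tickets that are still untouched ("open") and older than the cutoff."""
    cutoff = today - STALE_AFTER
    return [t for t in tickets if t.status == "open" and t.created < cutoff]


backlog = [
    Ticket("BAU-1", date(2023, 12, 1), "open"),         # old and untouched
    Ticket("BAU-2", date(2024, 3, 1), "open"),          # too recent to close
    Ticket("BAU-3", date(2023, 1, 1), "in_progress"),   # old, but being worked on
]
to_close = stale_tickets(backlog, today=date(2024, 7, 1))
print([t.key for t in to_close])
```

Only tickets that nobody has picked up are selected, which matches the spirit of the rule: anything already in progress is clearly important enough to keep.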

Reviews, Rewards and KPIs

The business had in the past considered a KPI system to reward people for hitting milestones. We had discussions around this and recognised early on that this would not work in our environment as the goal posts were constantly moving and it was impossible to set down targets for a 2-3 month period, never mind 12 month KPI reviews. To the developer, these would become more a de-motivation than a sense of achievement as there was little chance of hitting your KPI targets. I think this is true for most IT companies.

In an effort to give more effective recognition and keep people motivated, we suggested to the HR department that we do more frequent salary reviews. As a rule, the company would give out salary increases annually based on the recommendations from team leads and managers. I suggested that they change that to quarterly reviews to give more frequent recognition. It made no difference to the money overall, but a huge difference to a developer putting in a huge number of hours.

Further recognition was coupled with communication, in that I tried as much as possible to be aware of the type of work individual developers preferred. I am not saying that we treated devs like princesses and only gave them the good stuff, but be aware of what they are interested in and what gratifies them, and ensure that you delegate a reasonable proportion of this type of work to them when you can. Some developers are fire-fighters and get their kick from quickly achieving results, while others enjoy designing and developing features in new technologies. You would have thought everyone likes playing with new toys, but it is not true. Some want the instant gratification that comes with technical support.

Discontent is always a tricky one and I think it again comes down to communication. You need to have a direct, relaxed conversation with the person to establish the root cause. I had a few of those and it was about deciding whether the discontent was well founded or baseless. I tried to accommodate and make reasonable changes to improve the environment and type of work. Most of the time this worked, but very occasionally it was a lost cause and we had to move them on.

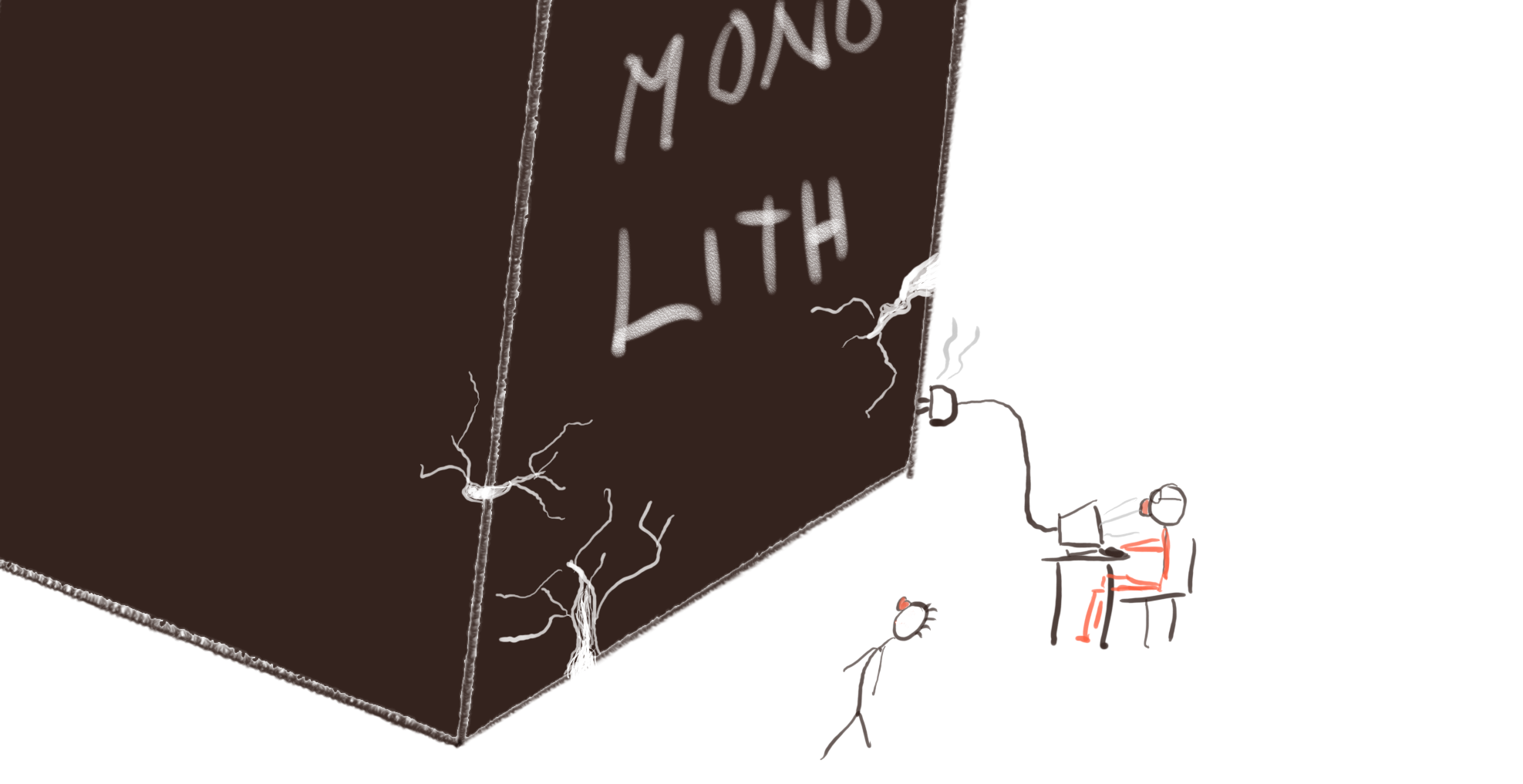

Technical Debt and building Monoliths

Probably the last thing that your CEO wants to hear about is technical debt. Unfortunately, legacy systems, application monoliths and technical debt build up quickly, especially if you have a highly successful application and the business is growing rapidly. The debt must be addressed though. If you don’t, your company will find itself hiring more and more resources but not really getting anywhere, and it will start to take much longer to get people on-boarded as the code base becomes increasingly quirky and unwieldy. Eventually, your developer retention will start to drop because the code is a mess to work with and there is no real improvement on the horizon. Added to that, the business becomes increasingly frustrated as it is unable to create new features, and the product starts to become unstable and difficult to keep operational.

We were fortunate in that our CEO and CTO did recognise the need to rebuild and worked on making accommodations for it, but it was not an easy road. It was a large code set that could not have any disruption. The approach the business started towards was to implement a gateway API with the legacy API behind it. They could then start replacing big problem areas in the legacy code with minimal disruption to the application. All new API code, developed in modern technologies, slotted into the gateway API, and the application could gradually improve and stabilise with minimal disruption. It was a good plan.
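To make the idea concrete, here is a minimal sketch of the routing decision such a gateway has to make. It is an illustration under my own assumptions, not the company's implementation: the backend names, the path prefixes and the `choose_backend` function are all hypothetical. Anything that has been rebuilt is sent to its new service, and everything else falls through to the legacy API. This style of incremental replacement is often called the strangler fig pattern.

```python
# Hypothetical backend names, used only for illustration.
LEGACY_BACKEND = "legacy-api"

# Assumed route table: path prefixes that have already been rebuilt,
# mapped to the new service that now owns them.
MIGRATED_PREFIXES = {
    "/payments": "payments-service",
    "/accounts/profile": "profile-service",
}


def choose_backend(path, migrated=MIGRATED_PREFIXES):
    """Pick the backend for a request path.

    A prefix matches only on whole path segments, and the longest
    matching prefix wins. Unmatched paths go to the legacy API.
    """
    matches = [p for p in migrated if path == p or path.startswith(p + "/")]
    if not matches:
        return LEGACY_BACKEND
    return migrated[max(matches, key=len)]


for path in ["/payments/123", "/accounts/profile", "/accounts/settings"]:
    print(path, "->", choose_backend(path))
```

Migrating another problem area then amounts to adding one entry to the route table once the new service is ready, and rolling back is a matter of removing it again.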

You Build it, you support it

Eventually it became clear that it was not sustainable to have a small number of developers do the full support of all product delivered by a hundred developers. In a larger environment, a single team just cannot keep up as the product teams pour out features on an ever-increasing range of technology platforms. We started talking about bringing support for features into the team that built them rather than having a BAU team that looked after all 24/7 support. You build it, you support it! It is not a bad idea. Arguably, the team that built the feature is in the best place to support it, as they are most familiar with the code set and the technology it is built in, and are therefore in the best possible position to provide the fastest and most accurate solution to the problem.

Inevitably, as more and more features are built within a team that is supporting its own product, the team will eventually spend more time supporting and have less bandwidth for new features. When the team gets to the point of doing more support than new product/feature development, consider breaking it into two teams. Split the support for existing features between the two teams to retain expert support for all features, and backfill the roles to eventually have two full product teams.

The ‘support your own product’ thing was a highly contentious topic on the floor. While most of the developers in all teams put in an astonishing number of hours to complete the product, very few had any interest in being available on a support roster outside of hours, even with after-hours bonuses and RDOs. This concept never got off the ground.

Summary

Overall, I found that ensuring good and frequent communication was the most important thing. Sounds obvious, but it isn’t always when you are under the pump. Communicate well with your team to ensure everyone is on the same page, working on relevant tickets, not too stressed and not stuck. Keep up the chats with your peers in other departments, even if it is just around the coffee machine, to ensure there aren’t overlaps and so that you have a sense of what is going on across the other teams. Equally, good and frequent communication with your stakeholders is critical to ensure they are aware of the tickets you are working on and that critical technical support issues are being addressed and suitably resourced.

Of course, the job is always easier being surrounded by a bunch of skilled and helpful people.

About

I have worked hands-on and hands-off in software development management and project delivery management, and as a solution architect and software developer, nationally and internationally as well as in remote, virtual teams around the world. More info in my profile: https://www.linkedin.com/in/matz-persson-328b854/